Feature Dev is Dead. Long Live Feature Dev

If you've been on tech Twitter this month, you've probably seen the discourse. Dario Amodei, CEO of Anthropic (the company that makes the AI tool half of Silicon Valley now codes with), warned of a "white-collar bloodbath," saying AI could eliminate 50% of entry-level white-collar jobs. Boris Cherny, who heads Claude Code at Anthropic, told Fortune last week that the software engineer role as we know it might not exist by year's end, and that the transition is going to be "painful for a lot of people." A viral essay called "The 2028 Global Intelligence Crisis" made the rounds predicting a full deflationary spiral triggered by mass white-collar displacement. Citadel Securities published a rebuttal calling it nonsense. Everyone has an opinion. Nobody agrees.

Here's mine: I don't think we're headed for a white-collar apocalypse. I don't think civilization collapses because Claude can write code better than I can. But I do think the transition is going to be ugly — specifically for software developers, and even more specifically for a particular type of software developer. The one who takes tickets and Figma files and turns them into production code. The feature dev.

I know this because for the past six months, I've been one. Feature dev is dead, and this week, the company I work for made it official.

Motion is not the first to do this:

- Shopify's CEO told employees they have to prove AI can't do the work before requesting headcount.

- Duolingo declared itself "AI-first" and started phasing out contractors.

- Klarna's CEO bragged about cutting headcount while growing revenue.

- Stripe built autonomous coding agents they call "minions" — which is both adorable and terrifying — that are merging over 1,000 pull requests per week with zero human-written code.

- Ramp built an internal agent that now powers 30% of their merged PRs, and they didn't even mandate it — engineers just started using it because it was faster.

- Block just cut 4,000 people — 40% of the company — and the stock surged 24%.

The company I work for did something similar this week: every pull request must now start with AI. Not encouraged. Not "we think you should try Copilot." Mandated. Effective immediately. Every PR starts with AI or it doesn't get merged.

I'm a feature developer there. So, uh, let me tell you what that's like.

Quick Context on Me

I've spent most of the past decade in ML and AI. Computer vision since 2016, sold a CV company, spent two years as director of engineering on a labs team implementing gen AI across the company. I'm not a feature dev by trade (or haven't been for about eight years), but for reasons that aren't important here, I took a feature dev role six months ago at a company I really like. It's been a front-row seat to everything I'm about to describe, and honestly, when they announced the AI mandate, my first thought wasn't "oh no, my job." It was "finally."

The Old Flow is Broken and Everyone Knows It

Here's how feature development works at most software companies in 2026, and honestly how it's worked for the past decade:

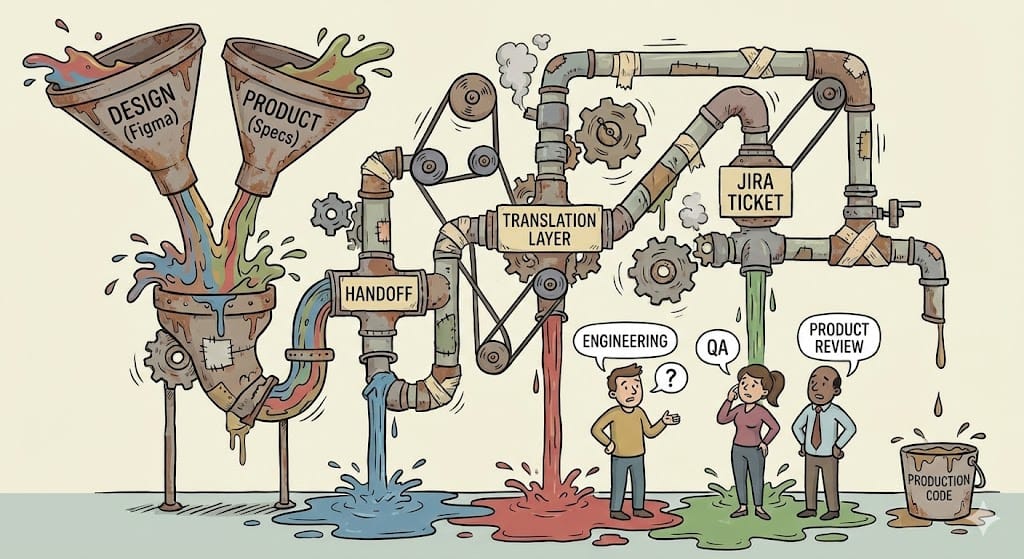

Design makes something in Figma. Product writes a spec. Someone cuts tickets. Engineering picks up the tickets. Engineering builds the thing. Product looks at it. Product doesn't like something. Design weighs in. Everyone argues about the thing. Engineering rebuilds the thing. QA finds bugs. Engineering fixes bugs. Product signs off. Ship it. Repeat forever until heat death of the universe.

Every single handoff in that chain is a translation layer. Design → product is a translation. Product → engineering is a translation. Engineering → product review is a translation back. And at every translation layer, information gets lost, context gets muddled, and someone ends up in a meeting saying "that's not what I meant."

This process has been broken for a long time. We all know it's broken. We've just been living with it because there wasn't a better option. The Agile people tried to fix it. The no-code people tried to fix it. Everyone tried to fix it by making the translations faster or more collaborative. Nobody questioned whether the translations needed to exist at all.

Until now.

What Replaces It

Here's what my company is building, and what I think every software company will be building within 18 months:

Product and design just... build it. Themselves. Directly.

Designer sees something they don't like in production? They don't cut a ticket. They don't schedule a meeting. They don't write a Jira description that an engineer will misinterpret anyway. They tell an AI agent to fix it. The agent opens a pull request. The designer clicks a preview link, checks it, approves it. Done. Shipped. No handoff. No translation layer. No meeting about the meeting about the button.

Product wants a new feature? They describe what they want. An AI agent builds it, pulls from a centralized knowledge base that knows the codebase patterns, the lint rules, the architectural decisions. Product previews the result. If it's wrong, they iterate directly with the agent. No ticket. No sprint planning. No "we'll get to it in Q3."

Engineering doesn't disappear in this model. Engineering shifts to something arguably more important: building the guardrails. Making sure the AI can't break prod. Setting up preview environments. Maintaining the infrastructure. Building the system that makes all of this safe and reliable.

This is not theoretical. My company announced this transition this week. The infrastructure is being built right now. Other companies are doing variations of the same thing. The question isn't whether this happens. It's how fast.

Agentic Engineering, or: How I Learned to Stop Worrying and Love the MCP-Enabled Knowledge Base

The nerds in the room (welcome) want to know how this actually works. So let's get into it. If you're not technical or don't care, skip ahead.

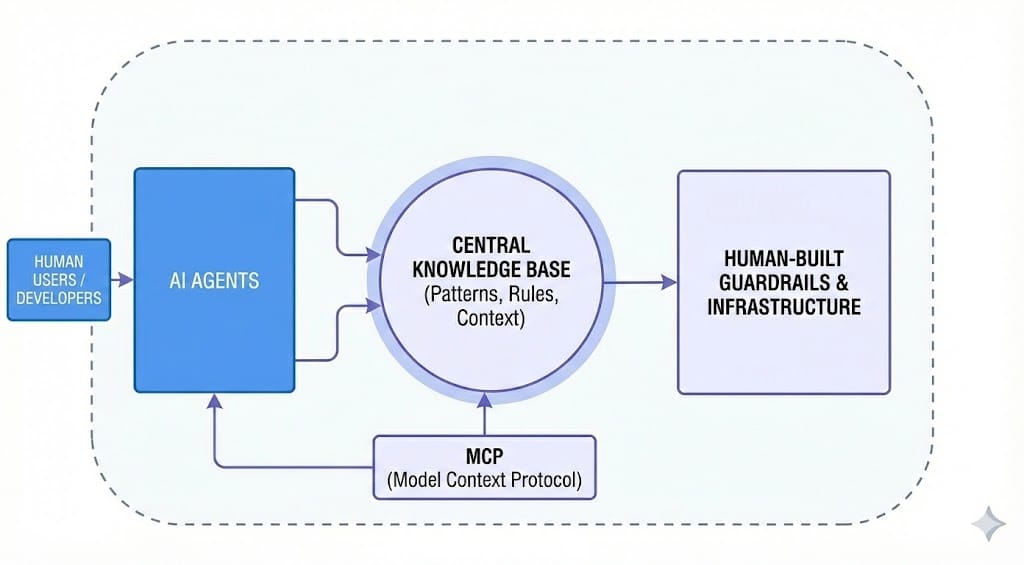

The basic architecture is what people are calling "agentic engineering." You have a knowledge base — think of it as a giant, structured document that contains everything an AI needs to know about your codebase. Patterns, conventions, architectural decisions, lint rules, common pitfalls, golden examples. Literally every engineer's brain extracted into this one place. This knowledge base gets served to AI agents via something called MCP (Model Context Protocol), which is basically a way for AI models to pull context from external sources.

Every engineer connects to this knowledge base through their coding tool of choice — Claude Code, Cursor, whatever. When they (or an AI agent) open a pull request, the agent pulls from this shared knowledge base. Same patterns. Same rules. Same expectations. No more "well, I like to do it this way" cowboy nonsense.
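To make the "shared knowledge base" idea concrete, here's a minimal sketch of what one could look like in memory. To be clear, this is not my company's schema and it is not the MCP wire protocol: the `Rule` fields, the glob matching, and the file paths are all assumptions I made up for illustration. The point is only that every agent asks the same store the same question ("what applies to the file I'm editing?") and gets the same answer.

```python
from dataclasses import dataclass, field
from fnmatch import fnmatchcase


@dataclass(frozen=True)
class Rule:
    """One knowledge-base entry (hypothetical schema, not a real product's)."""
    rule_id: str
    applies_to: str    # glob matched against the path the agent is editing
    guidance: str      # the convention, in plain language
    example: str = ""  # optional canonical ("golden") snippet


@dataclass
class KnowledgeBase:
    rules: list[Rule] = field(default_factory=list)

    def context_for(self, path: str) -> list[Rule]:
        """Return every rule whose glob matches the file being edited."""
        return [r for r in self.rules if fnmatchcase(path, r.applies_to)]


# Illustrative rules and paths only.
kb = KnowledgeBase([
    Rule("api-errors", "src/api/*.ts",
         "Return typed error objects; never throw raw strings."),
    Rule("ui-buttons", "src/ui/*.tsx",
         "Use the shared Button component.", "<Button variant='primary' />"),
    Rule("no-any", "src/*", "Do not use the `any` type."),
])

for rule in kb.context_for("src/api/users.ts"):
    print(f"[{rule.rule_id}] {rule.guidance}")
# [api-errors] Return typed error objects; never throw raw strings.
# [no-any] Do not use the `any` type.
```

In a real setup, a lookup like `context_for` would sit behind an MCP server so that Claude Code, Cursor, or an autonomous agent all hit the same source of truth.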

Here's the key insight, and this is the part that most people miss: when the AI gets something wrong, it's your fault, not the AI's fault.

I know, I know. But hear me out. If the AI generates code that doesn't match your patterns, the right response isn't "stupid AI." The right response is "I didn't document that pattern well enough" or "I didn't give the LLM the right context." So you go update the knowledge base. You add the rule. You add the canonical example. And now every agent, across every engineer, across every future PR, gets it right. In theory, that mistake does NOT happen again.
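Here's roughly what that correction loop looks like as code. Again, a sketch under assumptions: the dict-based store, the `Correction` type, and the `formatDate` helper named in the example rule are hypothetical. What it shows is the mechanic: a review finding becomes a rule plus a canonical example, and the next prompt context built for any agent includes it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Correction:
    """A review finding turned into documentation (hypothetical shape)."""
    pattern_name: str
    rule: str
    canonical_example: str


def apply_correction(knowledge_base: dict, fix: Correction) -> None:
    """Fold the finding back into the shared knowledge base."""
    knowledge_base[fix.pattern_name] = {
        "rule": fix.rule,
        "example": fix.canonical_example,
    }


def build_prompt_context(knowledge_base: dict) -> str:
    """Render every rule into the text block handed to an agent."""
    return "\n".join(
        f"- {name}: {entry['rule']} (e.g. {entry['example']})"
        for name, entry in sorted(knowledge_base.items())
    )


knowledge_base = {
    "api-errors": {
        "rule": "Return typed error objects.",
        "example": "return { ok: false, error }",
    },
}
before = build_prompt_context(knowledge_base)

# The agent formatted a date by hand; instead of only fixing the PR,
# document the pattern so no agent repeats the mistake.
apply_correction(knowledge_base, Correction(
    pattern_name="date-formatting",
    rule="Format dates with the shared formatDate helper.",
    canonical_example="formatDate(createdAt)",
))
after = build_prompt_context(knowledge_base)

print("date-formatting" in before, "date-formatting" in after)  # False True
```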

This is the compounding effect. Every mistake becomes a permanent fix. Every correction propagates everywhere. Over time, the one-shot success rate — the percentage of PRs the AI gets right on the first try — trends up. My company is tracking this metric explicitly. They're also tracking iteration counts, prompts used, chain-of-thought reasoning, which tools were called. Full telemetry on the AI development process.
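For the metrics-minded, the one-shot success rate is simple to compute once you log each agent run. I don't know exactly how my company defines it internally, so treat this as one plausible definition: a PR counts as one-shot if it merged after a single agent iteration, and the denominator is every PR the agent attempted. The `AgentRun` record and its field names are assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentRun:
    """Telemetry for one AI-started PR (hypothetical record shape)."""
    pr_id: str
    iterations: int  # agent attempts so far; 1 means no rework was needed
    merged: bool


def one_shot_success_rate(runs: list[AgentRun]) -> float:
    """Share of attempted PRs that merged on the first agent iteration."""
    if not runs:
        return 0.0
    one_shot = sum(1 for r in runs if r.merged and r.iterations == 1)
    return one_shot / len(runs)


def mean_iterations(runs: list[AgentRun]) -> float:
    """Average number of agent iterations per attempted PR."""
    if not runs:
        return 0.0
    return sum(r.iterations for r in runs) / len(runs)


runs = [
    AgentRun("pr-1", iterations=1, merged=True),
    AgentRun("pr-2", iterations=3, merged=True),
    AgentRun("pr-3", iterations=1, merged=True),
    AgentRun("pr-4", iterations=2, merged=False),
]

print(f"one-shot rate: {one_shot_success_rate(runs):.0%}")  # one-shot rate: 50%
print(f"mean iterations: {mean_iterations(runs):.2f}")      # mean iterations: 1.75
```

Tracked week over week, this is the number you'd expect to drift upward as the knowledge base fills in.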

The stated goal, and I am not exaggerating here, is to eventually make code reviews unnecessary. Not because code reviews are bad, but because the AI already wrote the code exactly the way you told it to. If the rules are good enough, and the guardrails are tight enough, the review already happened — it happened when you wrote the rules.

Will it get to 100%? No. Probably not ever. The "second 80%" is real — getting from 80% to 95% is harder than getting from 0% to 80%. AI still produces scary bugs. Novel patterns need hand-authored canonical examples before agents can extend them. You'll have a rough few months of "who the hell committed this" before the knowledge base fills in the gaps.

But will it get to the high 80s? Maybe the 90s over time? Yeah. I think so. And that's enough to fundamentally change what engineering teams look like.

So Where Do You Go?

If you're sitting in a feature dev chair like me, I'll be direct with you: this job, as currently defined, is not going to exist for much longer. The person who takes tickets and Figma files and translates them into production code — that specific role is getting compressed. Fast.

You have two directions:

Move toward product. Become the person deciding what gets built, not the person translating someone else's decisions into code. If you have good product instincts and you're already the engineer who's always pushing back on specs and suggesting better approaches, this is your lane.

Move toward AI platform and infrastructure. Build the harness. Build the knowledge base. Build the MCP servers. Build the compounding loop. Build the CI/CD guardrails. Build the telemetry. Be the person who makes AI-generated code reliable at scale. This is the plumbing, and plumbers are going to be very, very well paid for a long time.

I land squarely on the infrastructure side (granted, not at this company, but in general). Partly because it maps to what I've been doing for years — ML, computer vision, systems work. But also because I think there's going to be a long tail of companies that take a long time to catch up to where the cutting-edge companies are today. They're going to need people who have already lived through this transition. People who can walk in and say "I've seen how this works, let me build it for you."

That should carry me — and probably most engineers who make this shift — through whatever comes next.

The Optimistic Version

I want to end on this because I think the discourse around AI and jobs is exhausting in its pessimism.

It's already quaint that we used to handwrite every line of code. Like, by hand. Character by character. And that memory is only going to get more distant. But that's not a tragedy. That's progress.

What's actually happening is an expansion, not a contraction. Every product manager becomes a builder. Every designer gets direct control over their vision. Engineering becomes higher-leverage, not obsolete. The total amount of software that can be created goes up dramatically. The number of people who can participate in creating it goes up dramatically. I think products get better too. We could maybe even make them AMAZING. All those little "we don't have time to do that but it would be rad" ideas? You can actually do ALL of them now.

Is there going to be a turbulent transition? Yeah, probably. Some roles will compress before new ones expand. That's real and it sucks for the people in those seats (hi, it me). But I genuinely cannot believe that this massive leap in what humans can create leads to a worse outcome for humanity.

Of course, I acknowledge the risks. There's always the paperclip maximizer that could lead to our collective extinction. But that's a blog post for another day.

Features aren't going away. Software isn't going away. But the way we build it? That is forever changed. And if you're paying attention, you already knew it was coming.

I write about AI, engineering, and building things at the intersection of software and the physical world. If you want to argue about this post, I'm on Twitter/X and at andrewpierno.com.