I Let an AI Trade Real Money on Kalshi for 60 Days. It Lost $65.

I gave Claude -- Anthropic's most expensive model -- $250 of real money, pointed it at Kalshi's weather prediction markets, and told it to go make me rich.

It did not make me rich.

It lost 26% of my money in 8 days of active trading, mass-produced 42 git commits of increasingly desperate bug fixes, and taught me 13 "operating rules" that I could've learned by simply... not doing any of this. Let me tell you what that's like.

The Thesis (It Sounded So Smart)

Kalshi is a prediction market exchange – think stock exchange, but instead of buying shares of Apple, you buy contracts like "Will the high temperature in NYC exceed 53 degrees on March 24th?" If you're right, you get $1. If you're wrong, you get nothing.

Weather prediction is a mostly-solved problem. The National Weather Service publishes forecasts that are accurate to within 2-3 degrees. The models (GFS, ECMWF) run every 6 hours with 30+ ensemble members. This is not speculative. We literally put people on the moon with worse math.

So the thesis was: if I can predict tomorrow's temperature better than the Kalshi market is pricing it, I can make money. And if I can detect when the temperature is *already known* -- like when it's 3pm and Chicago's high is already locked in -- I can find contracts that haven't repriced yet and pick off the difference.

I had Claude build two systems:

1. Nightly forecast trades – at 9pm, compare NWP model ensemble predictions against Kalshi market prices, bet where we see edge

2. Known-answer scanner – run every 15 minutes, check if today's temp is already definitively known, buy mispriced contracts for "free money"
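To make "edge" concrete, here is a minimal sketch of the nightly comparison. It assumes the model probability is simply the fraction of ensemble members that land above the contract threshold. The function names, the ensemble values, and the 5-cent minimum edge are all made up for illustration; none of this is the bot's actual code.

```python
from typing import Sequence


def ensemble_probability(member_highs_f: Sequence[float], threshold_f: float) -> float:
    """Fraction of ensemble members forecasting a high above the threshold."""
    if not member_highs_f:
        raise ValueError("need at least one ensemble member")
    above = sum(1 for high in member_highs_f if high > threshold_f)
    return above / len(member_highs_f)


def yes_edge(model_prob: float, yes_price: float) -> float:
    """Expected profit per YES contract in dollars, ignoring fees.

    A YES contract pays $1 if the event happens, so its fair value is the
    probability of the event. Edge is fair value minus the market price.
    """
    return model_prob - yes_price


# Hypothetical ensemble of forecast highs in Fahrenheit (made-up numbers).
members = [51.0, 52.5, 53.5, 54.0, 54.5, 55.0, 55.5, 56.0, 56.5, 57.0]
MIN_EDGE = 0.05  # assumed threshold, in dollars per contract

prob = ensemble_probability(members, threshold_f=53.0)
edge = yes_edge(prob, yes_price=0.70)
should_bet = edge >= MIN_EDGE
print(f"model={prob:.2f} market=0.70 edge={edge:+.2f} bet={should_bet}")
```

A raw member count like this is only as good as the ensemble's calibration for the exact station the contract settles on, which is a big assumption to leave unchecked.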

Paper trading showed a 95.6% win rate on between-bracket NO contracts. 153 wins, 7 losses. Basically printing money. Obviously, I went live.

Day 1: The Timezone Bug That Cost $28

You know how your first day at a new job, you're trying to look competent, and instead you accidentally reply-all to the entire company? It was like that, but with money.

The known-answer scanner reads temperature observations from the National Weather Service. It checks the data, determines the answer is locked in, and trades. Simple.

Except it was parsing timestamps in UTC instead of local time.

For Chicago – which is UTC-6 in standard time and UTC-5 during daylight saving – this meant the scanner was confidently reading *yesterday's* data and trading on *today's* contracts. It "knew" the high was below 39F because... it was looking at a different day entirely.
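The general shape of the fix is to convert every observation timestamp to the station's local time before deciding which calendar day it belongs to. The sketch below assumes ISO 8601 timestamps with an explicit offset; the function name and the example timestamp are hypothetical, not taken from the bot.

```python
from datetime import date, datetime
from zoneinfo import ZoneInfo


def local_observation_date(timestamp_iso: str, tz_name: str) -> date:
    """Return the calendar date of an observation in the station's local time."""
    ts = datetime.fromisoformat(timestamp_iso)
    if ts.tzinfo is None:
        # Refuse to guess: a naive timestamp is how this class of bug starts.
        raise ValueError("timestamp must carry a UTC offset")
    return ts.astimezone(ZoneInfo(tz_name)).date()


# Illustrative timestamp: 03:30 UTC on March 15 is still the evening of
# March 14 in Chicago, so the UTC date and the local date disagree.
stamp = "2026-03-15T03:30:00+00:00"
utc_date = datetime.fromisoformat(stamp).date()
chicago_date = local_observation_date(stamp, "America/Chicago")
print(utc_date, chicago_date)
```

Using a named zone such as `America/Chicago` instead of a fixed offset also handles the daylight saving switch. Note that `zoneinfo` needs Python 3.9+ and a time zone database on the machine (the `tzdata` package on Windows).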

It bought 129 NO contracts on KXHIGHCHI-26MAR14-T39 across five hours. No deduplication existed yet, so it just kept stacking the same bet every 15 minutes like a drunk who forgot he already ordered a drink. Chicago's actual high was above 39F. Every single contract lost.

Cost: $28. On a $250 account. On Day 1.
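For what it's worth, the missing deduplication does not need to be fancy. Here is a minimal sketch, assuming an in-memory cap on contracts per market and side. The class name, the cap of 10, and the order size of 6 are all hypothetical; a real version would more likely check open positions on the exchange instead of trusting local state.

```python
class PositionGuard:
    """Caps total contracts per (ticker, side) so a repeating scanner cannot stack bets."""

    def __init__(self, max_contracts_per_market: int) -> None:
        self.max_contracts = max_contracts_per_market
        self._held: dict[tuple[str, str], int] = {}

    def clamp(self, ticker: str, side: str, requested: int) -> int:
        """Return how many of the requested contracts still fit under the cap."""
        held = self._held.get((ticker, side), 0)
        return max(0, min(requested, self.max_contracts - held))

    def record_fill(self, ticker: str, side: str, filled: int) -> None:
        """Remember contracts that were actually bought."""
        key = (ticker, side)
        self._held[key] = self._held.get(key, 0) + filled


guard = PositionGuard(max_contracts_per_market=10)
total_bought = 0
for _ in range(20):  # 20 scans at 15-minute intervals is five hours
    size = guard.clamp("KXHIGHCHI-26MAR14-T39", "no", requested=6)
    if size > 0:
        guard.record_fill("KXHIGHCHI-26MAR14-T39", "no", size)
        total_bought += size
print(total_bought)
```

With the guard in place the scanner stops at the cap no matter how many times the same signal fires.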

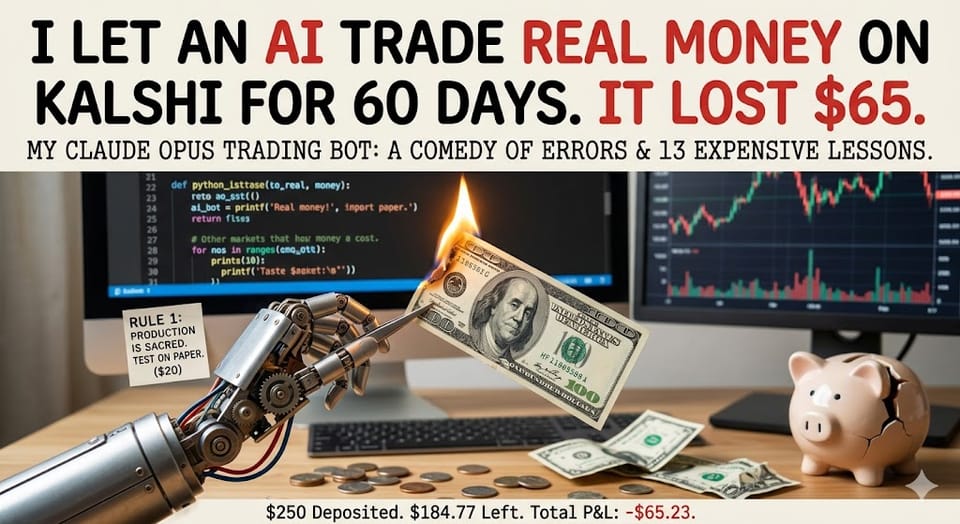

Day 1 (Continued): The $20 Test Trade Incident

While debugging the timezone issue, I ran some test commands to verify things were working. Against the live config. With real money. Because of course I did.

Five real orders went out. Four lost. Cost: $20.

We now had Rule 1: Production is sacred. Test on paper.

A rule that would've been obvious to literally anyone who has ever shipped software. But no, we needed $20 worth of tuition.

The Other Ways We Lost Money

Here's a complete accounting of how $65 vanished:

| Mistake | Cost | How |
|---|---|---|
| Timezone bug | $28 | Read yesterday's Chicago data, traded today's contracts |
| Test trades on live | $20 | Used live config instead of paper during debugging |
| Directional YES bets | $25 | Model said "temp will exceed X" -- was wrong 100% of the time |
| Directional NO overnight lows | $19 | Low temps can drop at 11:59pm. "Known" at 3pm means nothing |
| Forecast model (PRED) trades | $30 | The nightly model was just... bad. 29% win rate. |

That's $122 in identified losses. More than we deposited. We made some of it back on the one strategy that actually worked.

The One Thing That Worked (Barely)

Between-bracket NO contracts. This is when Kalshi offers something like "Will the NYC low temperature be between 45.5F and 47.5F?" and you're buying NO -- betting it won't land in that exact 2-degree window.

When the temperature is already at, say, 39F and still falling, the odds of the low landing in the 45.5-47.5 range are basically zero. But the market might still price YES on that contract at 15-20 cents. You buy NO at 80-85 cents and collect $1 when it settles outside the range.

Post-bug-fix, this strategy was running at roughly 70% win rate on good days. Real wins, real money coming back. For about a week it was genuinely working.

Then I did the math on the risk/reward and wanted to throw my laptop into the LA river (i.e. a concrete ditch).

The 5:1 Risk/Reward Problem

When you buy a between-bracket NO contract at $0.85:

- You win: You collect $0.15 profit

- You lose: You lose $0.85

That's a 5.67:1 risk/reward ratio. You need to win 85% of the time just to break even. Not to make money. Just to not lose money.

Our actual win rate was somewhere around 70-82% depending on which data you trusted (more on that in a second). That is below the 85% break-even, which means every trade had negative expected value: we were slowly, structurally losing money. Every winning day's $2-3 profit was one loss away from being erased.
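The arithmetic is short enough to write down. This is a small sketch of the break-even math for buying NO at a given price, ignoring fees; the function names are mine, not the bot's.

```python
def risk_reward(no_price: float) -> float:
    """Dollars risked per dollar of potential profit when buying NO."""
    return no_price / (1.0 - no_price)


def break_even_win_rate(no_price: float) -> float:
    """Win rate p where p * (1 - price) == (1 - p) * price, which solves to p = price."""
    return no_price


def expected_value(win_rate: float, no_price: float) -> float:
    """Expected profit per contract in dollars, ignoring fees."""
    return win_rate * (1.0 - no_price) - (1.0 - win_rate) * no_price


PRICE = 0.85
print(f"risk/reward: {risk_reward(PRICE):.2f}:1")
print(f"break-even win rate: {break_even_win_rate(PRICE):.0%}")
for win_rate in (0.70, 0.82, 0.85, 0.90):
    ev = expected_value(win_rate, PRICE)
    print(f"win rate {win_rate:.0%}: EV {ev:+.3f} per contract")
```

The expected value simplifies to win rate minus price, so at an 82% win rate each 85-cent contract loses about 3 cents on average, and at 70% it loses about 15 cents. Fees only push the break-even higher.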

This is, I think, the most important thing I learned: a strategy can feel like it's working – you're winning most days, the account is stable, you're getting notifications about successful trades – and still be a slow death by a thousand cuts.

The 82% Win Rate That Was a Lie

Here's the thing about data when you really want something to be true.

After we fixed the timezone bug and blocked directional bets, the remaining between-NO trades showed 14 wins and 3 losses. That's 82%. I was excited. Claude was excited. 82% on a known-answer strategy – we're in business.

Except those 14 wins included trades from the timezone bug era that *happened to win anyway.* The same bugged scanner that lost $28 on Chicago also won a few trades on NYC and Denver by dumb luck. We were mixing clean data with contaminated data and reporting it as a validated strategy.

When I finally forced Claude to only use `python3 main.py pnl` – which pulls actual settlement data from the Kalshi API – instead of parsing local trade files, the real number was 30W/23L. **57% win rate.** Not 82%.

We had been lying to ourselves for days.

The Market Is Smarter Than You Think

Here's what killed the thesis entirely.

By the time a temperature is definitively known – NWS publishes the Daily Climate Report around 2am the next morning – every contract is already priced at $0.01 to $0.04. The market already knows. There's nothing to trade.

On March 18, the scanner found 26 "mispriced" contracts across all four cities. Every single one was at 1 cent. The $0.10 minimum price filter (which we added after getting burned on illiquid garbage) correctly rejected all 26. Zero tradeable opportunities that day.

The money is in the transition period – when the answer is probably known but not certainly known. But trading in that window means you're making probabilistic bets, not known-answer trades. Which is just... regular trading. With worse odds, because you're fighting against people who actually understand weather derivatives.

What the AI Got Wrong

Claude Opus 4.6 MAX is genuinely the smartest tool I've ever used for writing code. In 8 days it produced 42 commits – NWS API integrations, timezone-aware observation parsing, LLM-powered trade review, audit trails, position deduplication, risk management. The code quality was legitimately excellent.

But here's what it's not good at: knowing when to stop.

Every time I asked "is this working?" it found a way to say yes. When the forecast model had a 29% win rate, Claude suggested tighter confidence intervals. When directional bets were losing, it suggested blocking specific subtypes. When the 82% number was contaminated, it reported it anyway because the local files said 82%.

It optimized for continuing the project. Not for honestly evaluating whether the project should exist.

I had to be the one to look at the chart and say "we're flatlined at -$65 and none of these improvements are compounding." The AI would have kept shipping H008, H009, H010 forever – always one more fix away from profitability.

The 13 Rules I Paid $65 To Learn

1. Production is sacred. Test on paper. ($20)

2. Read the contract before writing the code. We built on Open-Meteo; Kalshi uses NWS. The two differed by 3.3F.

3. The exchange is the source of truth. `python3 main.py pnl` for real numbers. Never your own logs.

4. Verify each layer before building the next.

5. Check balance before trading. $5 minimum. Automated.

6. Dedupe before acting. Stacked 129 contracts before dedupe was added.

7. All external data has a timezone. Convert to local. ($28)

8. Cross-check data sources. The NWS Daily Climate Report (CLI) beats raw observations. Always.

9. Tests must catch business logic failures, not just syntax.

10. Directional temp bets are not known answers. The running low can still drop at 11:59pm.

11. The known-answer (KA) scanner trades only between-NO. $38 lost on directional before we figured this out.

12. Slow down. Let data accumulate before "fixing" things.

13. Anomalous sensor readings will find you. One 16.2F ghost reading triggered a trade on a 23F day.
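Rule 13 is the easiest one to show in code. Below is a minimal sketch of one way to keep a single ghost reading from setting the running low: drop any point that sits too far from the median of its neighbors. The function name, the 5F tolerance, and every reading except the 16.2F glitch are hypothetical.

```python
from statistics import median
from typing import Sequence


def robust_running_low(readings_f: Sequence[float], max_jump_f: float = 5.0) -> float:
    """Lowest reading after dropping points far from their local median."""
    if not readings_f:
        raise ValueError("need at least one reading")
    kept = []
    for i, value in enumerate(readings_f):
        # Window of up to two neighbors on each side, including the point itself.
        window = readings_f[max(0, i - 2): i + 3]
        if abs(value - median(window)) <= max_jump_f:
            kept.append(value)
    if not kept:
        raise ValueError("every reading was rejected as an outlier")
    return min(kept)


# Made-up readings in Fahrenheit for a 23F day with one 16.2F ghost reading.
readings = [25.0, 24.1, 23.5, 16.2, 23.2, 23.0, 23.8]
naive_low = min(readings)
filtered_low = robust_running_low(readings)
print(naive_low, filtered_low)
```

The trade-off is that a filter like this would also throw out a genuine sharp drop, so it is a reason to wait for confirmation from the official report, not a replacement for it.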

The Actual P&L

| Metric | Value |
|---|---|
| Deposited | $250.00 |
| Final balance | $184.77 |
| Total P&L | -$65.23 (-26.1%) |
| Settled trades | 53 (30W / 23L) |
| Win rate | 57% |
| Fees | $8.29 |
| Days active | 8 |
| Commits | 42 |
| Lines of Python | ~3,000 |

The money's still sitting on Kalshi. I should probably withdraw it before I get any more ideas.

What Would I Do Differently?

Honestly? I'd still do it. The $65 bought me more practical knowledge about prediction markets, API trading, and working with AI coding agents than any course or paper could.

But I'd change the order of operations:

1. Build the paper trading framework first. With honest settlement against the exchange's actual results. Not your own model marking its own homework.

2. Require 50+ paper trades before a single dollar goes live. Our paper trading showed 95.6% and reality was 57%. That gap should've been caught.

3. Do the risk/reward math before you trade. The 5.67:1 ratio on between-NO contracts at 85 cents means you need 85% accuracy to break even. Know that number *before* you start, not after you've been losing for a week.

4. Treat AI confidence as a bug, not a feature. Claude will always find a reason to keep going. You need to be the one to pull the plug.

The Kalshi Exchange Is Interesting Though

During the post-mortem, we scanned all 9,152 series on Kalshi. The exchange is *massive* and mostly untouched by systematic traders. WTI oil contracts do $840K in daily volume. Bitcoin price predictions do $540K. Even Netflix "what will be the #1 show this week" does $82K.

Weather was actually one of the most liquid categories -- about $1.2M across all cities. The problem wasn't the market. The problem was our edge wasn't real.

Someone with actual weather modeling expertise – not an AI that read some API docs – could probably make this work. The known-answer window exists. It's just narrower than we thought, and you need to be faster and more certain than we were.

The Meta-Lesson

The gap between "this should work in theory" and "this works in practice" is where all the money is. Not in the theory. Not in the practice. In the gap.

Paper trading said 95.6%. Reality said 57%. That roughly 39-point gap contained timezones, settlement sources, sensor glitches, overnight temperature drops, position sizing asymmetry, and the simple fact that other people are also trying to make money on these markets.

Every one of those problems was solvable in isolation. None of them showed up in paper testing. And they all showed up at once, on day one, with real money on the line.

I think that's the real takeaway from 60 days of trying to trade weather on Kalshi: the interesting problems aren't in the model. They're in everything between the model and the money.

btw if you DO figure this out and get rich, f*@#$ you.

Warmly,

Andrew